A Generative Adversarial Network (GAN) is a machine learning approach to generative modelling introduced by Ian Goodfellow and his colleagues in 2014. It is made of two neural networks: a generator network and a discriminator network. The generator network learns to generate new examples, while the discriminator network tries to classify examples as real or fake. The two networks compete with each other (hence "adversarial"), which pushes the generator to produce fake examples that look like real ones.

Overview:

- What is GAN

- Architecture of GAN

- Training of GAN

- Problems with GAN

- Applications of GAN

- Summary

What is GAN

GANs are powerful generative models used for generating new data points. They are also useful in combination with supervised, semi-supervised and reinforcement learning.

A generative model learns the data distribution of a dataset so that it can generate new data points with some variation. Examples of generative models include Naive Bayes, Latent Dirichlet Allocation (LDA) and the Gaussian Mixture Model (GMM).

A Generative Adversarial Network (GAN) is an unsupervised learning approach used for generating new synthetic examples that look similar to the real examples. For example, a GAN trained on human face images can generate new faces that look so real that even a human observer finds it difficult to distinguish them from real faces.

GANs are considered unsupervised learning models because they do not need explicit labels annotated by a human. The real/fake labels are generated automatically: samples from the training dataset are labelled real, and samples produced by the generator are labelled fake.
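
As a minimal sketch of how the real/fake labels can be produced without any human annotation, the snippet below builds a labelled batch for the discriminator. The function name `make_discriminator_batch` and the placeholder strings standing in for images are assumptions made for illustration, not part of any library.

```python
import random

def make_discriminator_batch(real_samples, fake_samples, seed=None):
    """Label real samples 1 and generated samples 0, then shuffle them together."""
    labeled = [(x, 1) for x in real_samples] + [(x, 0) for x in fake_samples]
    random.Random(seed).shuffle(labeled)
    samples = [x for x, _ in labeled]
    labels = [y for _, y in labeled]
    return samples, labels

# Placeholder data: strings stand in for images here.
real = ["real_0", "real_1", "real_2"]
fake = ["fake_0", "fake_1", "fake_2"]
samples, labels = make_discriminator_batch(real, fake, seed=0)
```

The label depends only on where a sample came from, which is why no manual labelling step is needed.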

Architecture of GAN

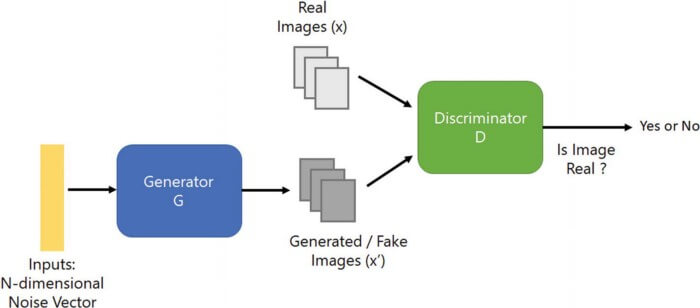

A simple GAN consists of two neural networks: a generator and a discriminator. The generator takes random noise as input and generates synthetic images that are meant to be indistinguishable from real images. The discriminator receives both real images and the fake images produced by the generator, and tries to classify each one as real or fake.

There are many types of GANs, and all of them contain at least one discriminator and one generator. Some GANs use multiple discriminators or generators, like MD-GAN (Multiple Discriminator Generative Adversarial Network), SGAN (Semi-Supervised Adversarial Network), etc.

Generator Neural Network

The generator neural network takes a fixed-length vector as input and generates a fake sample that resembles the samples in the training dataset. This fixed-length vector is drawn randomly from a Gaussian distribution and is used to seed the generative process.
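
Drawing the generator's input can be sketched in a few lines of plain Python. The helper name `sample_latent` and the vector length of 100 are assumptions chosen for illustration; the post does not prescribe a particular size.

```python
import random

def sample_latent(batch_size, latent_dim, rng=None):
    """Draw batch_size latent vectors, each with latent_dim standard-normal entries."""
    rng = rng or random.Random()
    return [[rng.gauss(0.0, 1.0) for _ in range(latent_dim)]
            for _ in range(batch_size)]

# Four noise vectors of length 100 (an assumed, commonly used size).
z = sample_latent(batch_size=4, latent_dim=100, rng=random.Random(42))
```

Each row of `z` would be fed to the generator to produce one fake sample.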

This vector space is also referred to as the latent space, as it is made up of latent variables. After training, points in the latent space act as a compressed representation of the data distribution, and they capture high-level concepts that can be seen in the samples the generator produces.

Once the generator neural network is trained well enough to produce samples that are hard to distinguish from real data, it is saved and used for generating new data samples.
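
The save-and-reuse step can be illustrated with a toy example. Everything here is an assumption for the sake of the sketch: the "generator" is a one-dimensional linear map, its parameters are made up rather than trained, and JSON is used only because it needs no extra libraries. A real model would be saved with its framework's own tools.

```python
import json
import os
import random
import tempfile

def generate(params, z):
    """Toy one-dimensional generator: a linear map of the noise value z."""
    return params["scale"] * z + params["shift"]

# Hypothetical parameters standing in for a trained generator.
trained_params = {"scale": 0.5, "shift": 4.0}

with tempfile.TemporaryDirectory() as tmp_dir:
    path = os.path.join(tmp_dir, "toy_generator.json")

    # Save the parameters once training is finished ...
    with open(path, "w") as f:
        json.dump(trained_params, f)

    # ... and reload them later. The discriminator is not needed any more.
    with open(path) as f:
        loaded_params = json.load(f)

rng = random.Random(0)
new_samples = [generate(loaded_params, rng.gauss(0.0, 1.0)) for _ in range(5)]
```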

Discriminator Neural Network

The discriminator neural network is a simple classification model. It takes the real data samples from the training dataset and fake data samples generated by the generator neural network, then predicts a binary class label to classify the data samples into real or fake.
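
To make the "binary classifier" view concrete, here is a minimal sketch of a discriminator on scalar samples, scored with binary cross-entropy. The single-weight logistic model, the parameter values and the sample values are all assumptions for illustration; a real discriminator would be a deeper network.

```python
import math

def sigmoid(s):
    """Numerically stable logistic function."""
    if s >= 0:
        return 1.0 / (1.0 + math.exp(-s))
    e = math.exp(s)
    return e / (1.0 + e)

def discriminator(x, w, c):
    """Toy discriminator on a scalar sample x: returns P(x is real)."""
    return sigmoid(w * x + c)

def binary_cross_entropy(p, label, eps=1e-12):
    """Loss for one prediction p against label 1 (real) or 0 (fake)."""
    p = min(max(p, eps), 1.0 - eps)
    return -(label * math.log(p) + (1 - label) * math.log(1.0 - p))

w, c = 1.0, -2.0                    # arbitrary illustrative parameters
p_real = discriminator(4.0, w, c)   # a sample treated as real
p_fake = discriminator(0.0, w, c)   # a sample treated as fake
loss = binary_cross_entropy(p_real, 1) + binary_cross_entropy(p_fake, 0)
```

The loss is low when real samples get a probability close to 1 and fake samples a probability close to 0.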

Generally, the discriminator neural network is discarded after the training is over. Sometimes it is used in transfer learning as it has learned the features that can effectively be used in other tasks like classification.

Training of GAN

The training process of a Generative Adversarial Network is quite different from most other deep learning tasks. In a GAN, the discriminator and the generator are trained together in an adversarial manner.
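
In the original 2014 formulation, this adversarial setup is written as a two-player minimax game over a value function $V(D, G)$. Here $D(x)$ is the discriminator's estimated probability that $x$ is real, $G(z)$ is the generator's output for noise $z$, $p_{\text{data}}$ is the data distribution and $p_z$ is the noise distribution:

$$
\min_G \max_D V(D, G) = \mathbb{E}_{x \sim p_{\text{data}}}\left[\log D(x)\right] + \mathbb{E}_{z \sim p_z}\left[\log\left(1 - D(G(z))\right)\right]
$$

The discriminator tries to make $V$ large by scoring real samples near 1 and generated samples near 0, while the generator tries to make it small by producing samples that the discriminator scores near 1.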

In every training iteration, we first train the discriminator neural network and then the generator neural network.

While the discriminator neural network is being trained, the generator neural network remains idle (not trained): it only runs a forward pass and no backpropagation is done through it. The generator produces a batch of fake samples from random noise, and these, along with real samples from the training dataset, are given to the discriminator for classification as real or fake. The discriminator is trained to get better at telling real and fake samples apart.
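
The discriminator phase can be sketched on a toy one-dimensional problem. All specifics below are assumptions made for illustration: the real data is drawn from a Gaussian with mean 4, the generator is a fixed linear map of the noise, the discriminator is a single-weight logistic model, and plain gradient descent on binary cross-entropy is used as the update rule.

```python
import math
import random

def sigmoid(s):
    """Numerically stable logistic function."""
    if s >= 0:
        return 1.0 / (1.0 + math.exp(-s))
    e = math.exp(s)
    return e / (1.0 + e)

def discriminator_step(real_batch, fake_batch, w, c, lr=0.05):
    """One gradient-descent step on the discriminator's cross-entropy loss.

    The fake samples are plain numbers here, so nothing in this function
    can change the generator's parameters.
    """
    batch = [(x, 1.0) for x in real_batch] + [(x, 0.0) for x in fake_batch]
    grad_w = grad_c = 0.0
    for x, y in batch:
        p = sigmoid(w * x + c)   # predicted probability that x is real
        grad_w += (p - y) * x    # d(loss)/dw for this sample
        grad_c += (p - y)        # d(loss)/dc for this sample
    n = len(batch)
    return w - lr * grad_w / n, c - lr * grad_c / n

rng = random.Random(0)
gen_scale, gen_shift = 1.0, 0.0  # generator parameters, held fixed in this phase
real_batch = [rng.gauss(4.0, 0.5) for _ in range(64)]
fake_batch = [gen_scale * rng.gauss(0.0, 1.0) + gen_shift for _ in range(64)]

w, c = 0.0, 0.0                  # discriminator parameters, the only ones updated
for _ in range(200):
    w, c = discriminator_step(real_batch, fake_batch, w, c)
```

After these updates the weight `w` is positive, so the toy discriminator assigns higher "real" probability to larger values, which is where the real samples sit.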

After the discriminator neural network is trained, we start the training of the generator neural network.

While the generator neural network is being trained, the discriminator neural network remains idle: its weights are frozen, although gradients still flow back through it to the generator. We pass a batch of fake samples (for example, the batch generated while training the discriminator) through the discriminator and collect its predictions. These predictions are used to update the generator so that it gets better than before at fooling the discriminator.
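
The generator phase can be sketched on the same kind of toy one-dimensional setup. The assumptions are: a linear generator `G(z) = a*z + b`, a frozen logistic discriminator `D(x) = sigmoid(w*x + c)` with made-up parameters, and the generator loss `-log D(G(z))`, which is one common choice rather than the only one.

```python
import math
import random

def sigmoid(s):
    """Numerically stable logistic function."""
    if s >= 0:
        return 1.0 / (1.0 + math.exp(-s))
    e = math.exp(s)
    return e / (1.0 + e)

def softplus(s):
    """log(1 + exp(s)), computed stably."""
    return max(s, 0.0) + math.log1p(math.exp(-abs(s)))

def generator_loss(z_batch, a, b, w, c):
    """Mean of -log D(G(z)): small when the discriminator is fooled."""
    return sum(softplus(-(w * (a * z + b) + c)) for z in z_batch) / len(z_batch)

def generator_step(z_batch, a, b, w, c, lr=0.05):
    """One gradient-descent step on the generator; w and c are only read."""
    grad_a = grad_b = 0.0
    for z in z_batch:
        x = a * z + b                 # fake sample
        p = sigmoid(w * x + c)        # discriminator's prediction for it
        dloss_dx = (p - 1.0) * w      # chain rule through the frozen discriminator
        grad_a += dloss_dx * z
        grad_b += dloss_dx
    n = len(z_batch)
    return a - lr * grad_a / n, b - lr * grad_b / n

rng = random.Random(0)
z_batch = [rng.gauss(0.0, 1.0) for _ in range(64)]
w, c = 1.0, -2.0   # discriminator parameters, frozen during this phase
a, b = 1.0, 0.0    # generator parameters, the only ones updated

loss_before = generator_loss(z_batch, a, b, w, c)
for _ in range(100):
    a, b = generator_step(z_batch, a, b, w, c)
loss_after = generator_loss(z_batch, a, b, w, c)
```

The gradient passes through the discriminator's prediction, but only `a` and `b` change, which is what "the discriminator remains idle" means in practice.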

In simple terms, the generator neural network is updated based on how well its generated samples trick the discriminator.

When the discriminator neural network successfully tells the real and fake samples apart, only small updates are made to the discriminator, whereas the generator neural network gets large updates to its parameters.

The scenario can also be reversed: when the generator neural network successfully fools the discriminator neural network, the generator needs only small updates to its parameters, while the discriminator gets larger ones.
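
The alternating procedure described in this section can be put together in a small, self-contained sketch. It is a toy under stated assumptions, not a practical GAN: the data is one-dimensional (Gaussian with mean 4), the generator is `G(z) = a*z + b`, the discriminator is `D(x) = sigmoid(w*x + c)`, the generator loss is `-log D(G(z))`, and the gradients are written out by hand. The function name `train_toy_gan` and all hyperparameters are hypothetical.

```python
import math
import random

def sigmoid(s):
    """Numerically stable logistic function."""
    if s >= 0:
        return 1.0 / (1.0 + math.exp(-s))
    e = math.exp(s)
    return e / (1.0 + e)

def train_toy_gan(steps=500, batch_size=64, lr=0.05, seed=0):
    rng = random.Random(seed)
    a, b = 1.0, 0.0   # generator G(z) = a*z + b
    w, c = 0.0, 0.0   # discriminator D(x) = sigmoid(w*x + c)
    history = []
    for _ in range(steps):
        real = [rng.gauss(4.0, 0.5) for _ in range(batch_size)]
        noise = [rng.gauss(0.0, 1.0) for _ in range(batch_size)]
        fake = [a * z + b for z in noise]

        # Phase 1: update the discriminator; a and b are not touched.
        grad_w = grad_c = 0.0
        for x, y in [(x, 1.0) for x in real] + [(x, 0.0) for x in fake]:
            p = sigmoid(w * x + c)
            grad_w += (p - y) * x
            grad_c += (p - y)
        w -= lr * grad_w / (2 * batch_size)
        c -= lr * grad_c / (2 * batch_size)

        # Phase 2: update the generator; w and c are not touched.
        grad_a = grad_b = 0.0
        for z in noise:
            p = sigmoid(w * (a * z + b) + c)
            dloss_dx = (p - 1.0) * w   # gradient flows through the discriminator
            grad_a += dloss_dx * z
            grad_b += dloss_dx
        a -= lr * grad_a / batch_size
        b -= lr * grad_b / batch_size

        history.append((a, b, w, c))
    return history

history = train_toy_gan()
a, b, w, c = history[-1]
```

Because this discriminator is linear, it can only compare where the samples are located, so the toy mainly moves the generator's shift `b`. Plotting `history` is a simple way to see whether the two players settle down or keep chasing each other.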

Problems with GAN

GANs suffer from several problems. Some of the problems are discussed below:

- Mode collapse: A healthy GAN produces a wide range of outputs as the random noise given to the generator changes. In mode collapse, the generator produces only a limited variety of samples regardless of the noise. In extreme cases, it produces the same output for every noise input.

- Non-convergence: The discriminator and generator models oscillate, become unstable and sometimes never converge.

- Vanishing gradient: There should be a balance between the power of the discriminator and the generator. If the discriminator is too powerful, the generator fails to train because the gradients it receives vanish.
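
The vanishing gradient problem can be seen numerically with a short sketch. It compares the gradient the generator receives, with respect to the discriminator's logit on a fake sample, under two generator losses: the minimax loss `log(1 - D)` and the alternative `-log(D)`, often called the non-saturating loss. The second loss is not discussed in this post and is included here only as a point of comparison; the function names are made up for the example.

```python
import math

def sigmoid(s):
    """Numerically stable logistic function."""
    if s >= 0:
        return 1.0 / (1.0 + math.exp(-s))
    e = math.exp(s)
    return e / (1.0 + e)

def generator_grad_minimax(logit):
    """d/d(logit) of log(1 - D), where D = sigmoid(logit) scores a fake sample."""
    return -sigmoid(logit)

def generator_grad_non_saturating(logit):
    """d/d(logit) of -log(D), the alternative generator loss."""
    return -(1.0 - sigmoid(logit))

# Logits the discriminator assigns to a fake sample, from unsure (0)
# to very confident that the sample is fake (-10).
logits = [0.0, -2.0, -5.0, -10.0]
minimax_grads = [generator_grad_minimax(s) for s in logits]
non_saturating_grads = [generator_grad_non_saturating(s) for s in logits]
```

As the discriminator becomes more confident that a sample is fake, the minimax gradient shrinks towards zero, so the generator gets almost no signal exactly when it needs it most. The alternative loss keeps the gradient magnitude close to 1 in that regime.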

Applications of GAN

Other than generating synthetic images, GANs are used in a wide range of applications, including:

- Super-resolution

- Image denoising

- Image to image translation

- Next frame prediction

- Text to image translation

- 3D object generation

- Music generation

- Text generation

and many more.

Summary

In this post, we have gone through an overview of the Generative Adversarial Network.

- A GAN is a generative model that uses unsupervised learning to generate new data samples.

- A GAN consists of two neural networks: a generator and a discriminator. The generator produces new data samples that look like the real data samples, and the discriminator tries to tell real samples from fake ones.

Still have questions? Comment below and I will do my best to answer.