Generative Adversarial Networks (GANs) are a widely used approach to generating data that mimics real-world distributions. One intriguing development in this area is the Conditional GAN, an extension of the classic GAN architecture that conditions generation on additional information. This blog post explains what Conditional GANs are, why they matter, their advantages and disadvantages, and the applications that build on them.

arXiv research paper: Conditional Generative Adversarial Nets

What is GAN

A Generative Adversarial Network (GAN) is a machine learning approach to generative modelling introduced by Ian Goodfellow and his colleagues in 2014. It is made of two neural networks: a generator and a discriminator. The generator learns to produce new examples, while the discriminator tries to classify examples as real (drawn from the training data) or fake (produced by the generator). The two networks compete with each other (hence "adversarial"), and this competition pushes the generator towards fake examples that look like real ones.
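To make the competition concrete, here is a minimal sketch of the two losses in plain Python. It assumes the discriminator outputs a probability that a sample is real, and it uses the common non-saturating form of the generator loss. The function names and the example probabilities are illustrative, not taken from any particular library.

```python
import math

def discriminator_loss(d_real, d_fake):
    """Negated discriminator objective: -[mean log D(x) + mean log(1 - D(G(z)))]."""
    real_term = sum(math.log(p) for p in d_real) / len(d_real)
    fake_term = sum(math.log(1.0 - p) for p in d_fake) / len(d_fake)
    return -(real_term + fake_term)

def generator_loss(d_fake):
    """Non-saturating generator loss: -mean log D(G(z))."""
    return -sum(math.log(p) for p in d_fake) / len(d_fake)

# Hypothetical discriminator outputs (probability that a sample is real).
# Case 1: the discriminator confidently spots the fakes.
confident = discriminator_loss(d_real=[0.9, 0.8], d_fake=[0.1, 0.2])
# Case 2: the generator has improved and the fakes look fairly real.
fooled = discriminator_loss(d_real=[0.9, 0.8], d_fake=[0.7, 0.6])

print(confident < fooled)  # the discriminator is worse off when it is fooled
print(generator_loss([0.7, 0.6]) < generator_loss([0.1, 0.2]))  # the generator is better off
```

The two losses pull in opposite directions on the same quantity, the discriminator's score for fake samples, which is what makes the training adversarial.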

Read more: GAN – What is Generative Adversarial Network?

Conditional GAN

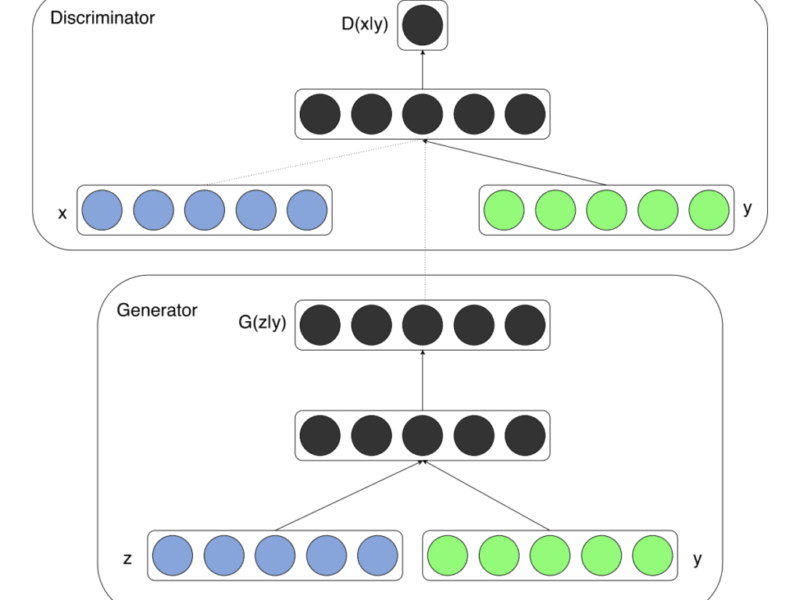

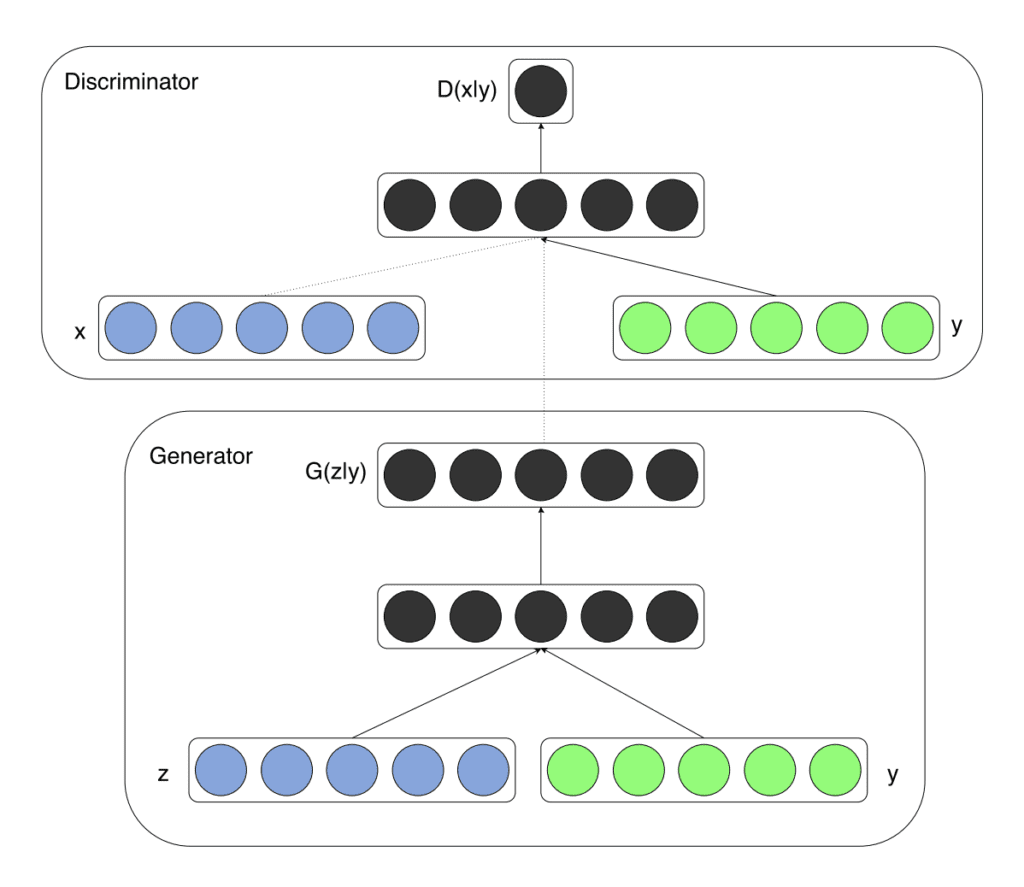

Conditional GAN, known as cGAN, is an extension of the traditional GAN framework. It was proposed by Mehdi Mirza and Simon Osindero in 2014, shortly after Ian Goodfellow and his colleagues introduced the original GAN. While a standard GAN consists of a generator and a discriminator engaged in an adversarial training process to produce realistic data, a cGAN introduces a new element: conditioning. This means that both the generator and the discriminator are given additional information, or context, that guides the generation process.

In mathematical terms, a cGAN is trained to generate data X conditioned on some auxiliary information Y. This extra information Y is typically a class label or a categorical description, although it can also be data from another modality. The generator is fed not only a random noise vector z but also the conditional information Y, resulting in a more controlled and targeted data generation process. The discriminator, on the other hand, receives a sample (real or generated) together with the corresponding conditional information, enabling it to assess the authenticity of the sample in the context of that information.
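Written out, the objective from the Conditional Generative Adversarial Nets paper has the same two-player form as the original GAN objective, with both networks additionally receiving the condition. The notation follows the paper, so lowercase $x$ and $y$ stand for the data X and the auxiliary information Y described above. The symbols $p_{\text{data}}$ and $p_z$ (the data and noise distributions) are not used elsewhere in this post.

$$
\min_G \max_D V(D, G) = \mathbb{E}_{x \sim p_{\text{data}}(x)}\left[\log D(x \mid y)\right] + \mathbb{E}_{z \sim p_z(z)}\left[\log\left(1 - D(G(z \mid y))\right)\right]
$$

Dropping $y$ from both terms recovers the standard GAN objective.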

Why Conditional GAN

Conditional GANs address a fundamental limitation of traditional GANs – lack of control over the generated data. By incorporating conditional information, cGANs empower users to influence and manipulate the generated samples in a meaningful way. This opens the door to a plethora of applications where context is crucial, ranging from image synthesis to style transfer, text-to-image generation, and even super-resolution tasks.

Advantages

- Controlled Generation: The primary advantage of cGANs is the ability to steer the generative process by providing additional information. This enables users to generate specific instances or variations of data based on the given conditions.

- Contextual Realism: Conditioning can make generated samples more coherent and better aligned with the specified conditions, because both networks see the context that a sample is supposed to match.

- Multi-Modal Generation: cGANs can model one-to-many mappings. For a single condition, varying the noise vector can yield several distinct but valid outputs. This is particularly useful in tasks where diverse outputs are desired.
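One common way to feed the condition to both networks is to one-hot encode the label and concatenate it with the other input. The sketch below shows only that input-building step in plain Python, with no neural network involved. The helper names, `NOISE_DIM`, and `NUM_CLASSES` are assumptions made for illustration. Real implementations often use a learned label embedding instead of a one-hot vector.

```python
import random

def one_hot(label, num_classes):
    """Encode an integer class label as a one-hot list."""
    vec = [0.0] * num_classes
    vec[label] = 1.0
    return vec

def sample_noise(dim, rng):
    """Draw the noise vector z from a standard normal distribution."""
    return [rng.gauss(0.0, 1.0) for _ in range(dim)]

def generator_input(z, label, num_classes):
    """Concatenate the noise z with the one-hot condition."""
    return list(z) + one_hot(label, num_classes)

def discriminator_input(sample, label, num_classes):
    """Pair a (real or generated) sample with the same condition."""
    return list(sample) + one_hot(label, num_classes)

rng = random.Random(0)
NOISE_DIM, NUM_CLASSES = 4, 3  # illustrative sizes

# Controlled generation: fix the condition (class 2) and vary only the noise.
# Each input asks the generator for a different instance of the same class.
inputs = [
    generator_input(sample_noise(NOISE_DIM, rng), 2, NUM_CLASSES)
    for _ in range(3)
]
for g_in in inputs:
    print(len(g_in), g_in[NOISE_DIM:])
```

The label part of every input is identical while the noise part differs, which is where both the control and the variety come from.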

Disadvantages

- Data Availability: cGANs rely on annotated or labelled data for conditioning. Obtaining such data can be challenging and time-consuming, limiting the applicability of cGANs in certain domains.

- Mode Collapse: Like traditional GANs, cGANs can suffer from mode collapse, where the generator produces a limited variety of samples regardless of the input conditions.
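A rough way to spot mode collapse is to measure how much the generated samples vary within each condition. The sketch below is a crude heuristic, not a standard evaluation metric. The sample values, the label names, and the `1e-3` threshold are all made up for illustration.

```python
import math
from itertools import combinations

def mean_pairwise_distance(samples):
    """Average Euclidean distance over all pairs of samples."""
    pairs = list(combinations(samples, 2))
    if not pairs:
        return 0.0
    return sum(math.dist(a, b) for a, b in pairs) / len(pairs)

def diversity_per_label(samples_by_label):
    """Compute a diversity score for each condition label."""
    return {
        label: mean_pairwise_distance(samples)
        for label, samples in samples_by_label.items()
    }

# Toy vectors standing in for generator outputs, grouped by condition.
generated = {
    "cat": [[0.1, 0.9], [0.8, 0.2], [0.4, 0.5]],  # varied outputs
    "dog": [[0.5, 0.5], [0.5, 0.5], [0.5, 0.5]],  # identical outputs
}

scores = diversity_per_label(generated)
# Flag labels whose samples are (nearly) identical despite different noise.
collapsed = [label for label, score in scores.items() if score < 1e-3]
print(collapsed)
```

In practice the distance would be computed on real generator outputs, usually in a feature space rather than on raw pixels.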

Applications

- Image-to-Image Translation: cGANs have been employed to translate images from one domain to another while preserving specific attributes. Examples include turning satellite images into maps or sketches into realistic images.

- Text-to-Image Generation: By conditioning the generator on text descriptions, cGANs can generate images that correspond to textual input. This finds applications in creating images from textual prompts or assisting in artistic endeavours.

- Super-Resolution: cGANs can be used to enhance the resolution of images, producing high-quality outputs from low-resolution inputs, by conditioning on the low-resolution image.

- Style Transfer: With conditional information representing style or artistic features, cGANs can transfer artistic styles from one image to another, enabling creative transformations.

- Data Augmentation: cGANs can generate additional data for training purposes, boosting the performance of machine learning models in scenarios with limited labelled data.
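For data augmentation, the conditioning label is what lets you ask for more samples of exactly the classes that are under-represented. The sketch below covers only the planning step: it works out which labels to feed a trained cGAN generator so that every class reaches the size of the largest one. The class names are hypothetical, and the generator call itself is left out because it depends on the model.

```python
from collections import Counter

def synthetic_label_plan(labels):
    """For each minority class, count the synthetic samples needed to match the largest class."""
    counts = Counter(labels)
    target = max(counts.values())
    return {cls: target - n for cls, n in counts.items() if n < target}

def augmentation_labels(plan):
    """Expand the plan into the list of condition labels to feed the generator."""
    return [cls for cls, n in sorted(plan.items()) for _ in range(n)]

# Hypothetical, imbalanced training labels.
train_labels = ["healthy"] * 5 + ["defect"] * 2 + ["scratch"] * 3

plan = synthetic_label_plan(train_labels)
conditions = augmentation_labels(plan)
print(plan)
print(len(conditions))
```

Each entry in `conditions` would be paired with a fresh noise vector and passed to the generator to produce one synthetic training example.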

Conclusion

Conditional GANs represent a significant evolution in the GAN landscape, offering a powerful mechanism for incorporating context into the generative process. With the ability to generate data that aligns with specific conditions, cGANs have carved a niche in various applications, from artistic endeavours to practical problem-solving. While challenges such as data availability and mode collapse persist, ongoing research and innovations in the field are likely to overcome these limitations, further solidifying the role of conditional GANs in shaping the future of generative models.

Read More

- How to Develop a Conditional GAN (cGAN) From Scratch

- Vanilla GAN in TensorFlow

- GAN – Google Advance Course

- DCGAN – Implementing Deep Convolutional Generative Adversarial Network in TensorFlow
